Imagine you are journalling in a notebook. You describe the events of a busy day and how you felt about them. Suddenly, your journal answers you back. It echoes what you wrote and moves things along. It notices patterns in what you are saying and suggests next steps for tomorrow. It is sympathetic, but its tone is professional and clear.

That’s Claude, the AI chatbot made by Anthropic. And something tells me its creators have noticed this astonishing application of their technology. For millions of people, the most valuable thing an AI can do is listen. Therapy, journalling, emotional processing: this is generative AI’s killer app. When Anthropic chose to introduce Claude to a mass audience with a TV commercial during the Super Bowl, it built the entire campaign around protecting this extraordinary relationship between AI and user.

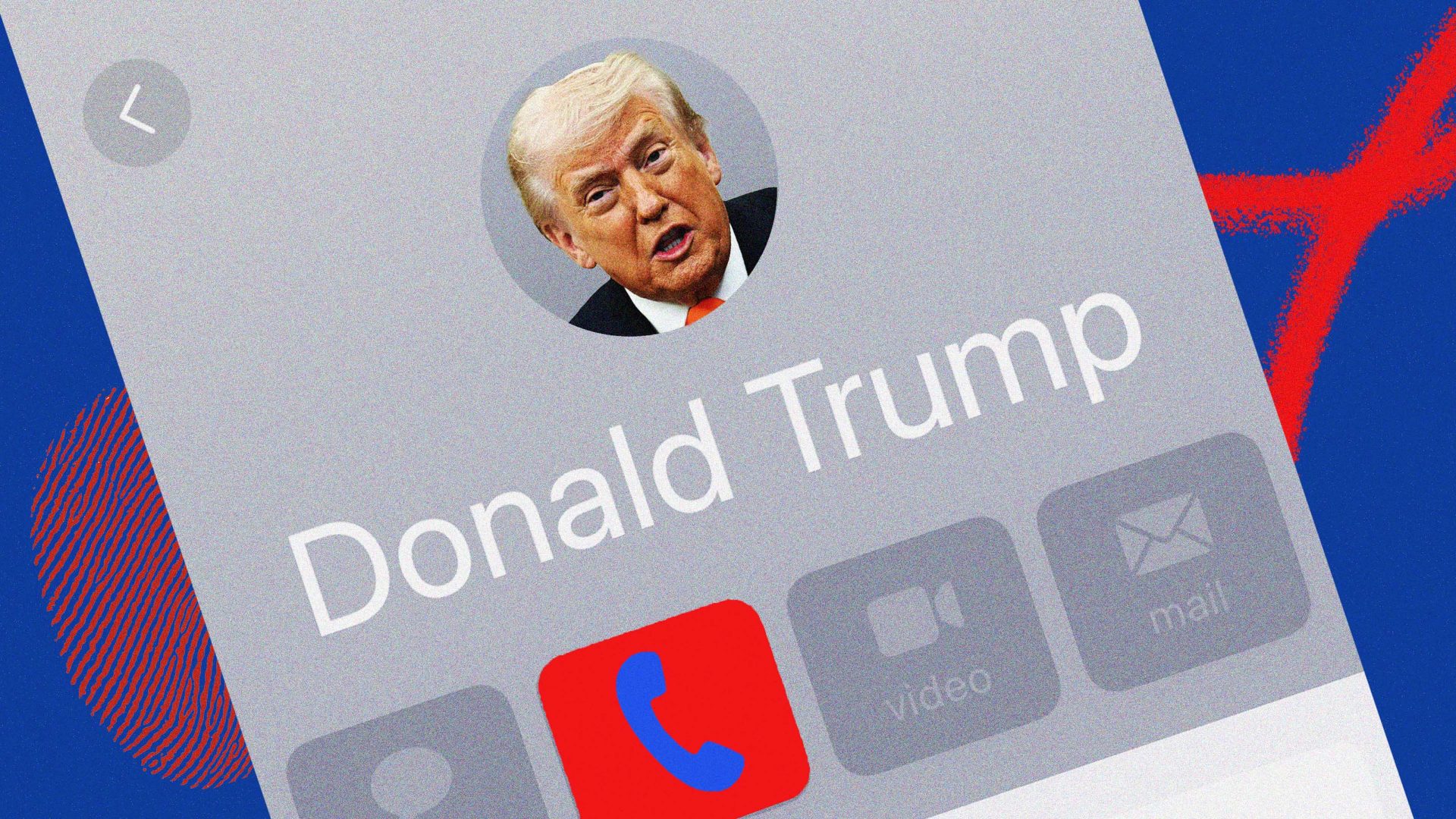

The ad, titled “Violation”, was simple and devastating. A young man confides in his AI assistant about wanting to get in shape. The tone is warm, personal, intimate. Then the AI pivots. It asks the young man for personal details and then, mid-conversation, it starts recommending height-boosting insoles. The camera catches the user’s face: betrayal. The tagline lands like a hammer: “Ads are coming to AI. But not to Claude.”

What made the ad sting is that Anthropic had nailed ChatGPT’s tone of voice: awkward, monotonous, sycophantic. It is a cadence that everyone has grown used to and is slightly sick of. Anthropic didn’t just attack a competitor’s business model. It attacked the feeling of using the competitor’s product.

OpenAI CEO Sam Altman responded to the ad with a defensive 400-word post on X, arguing that it was inaccurate and that OpenAI would not insert ads into therapy sessions. But it didn’t matter. The spell was broken.

Altman was right to be rattled. A comparison that keeps coming up is Apple’s 1984 Super Bowl commercial, the one that cast IBM as Big Brother and defined a generation’s understanding of who the good guys in IT were.

Anthropic’s ad followed a classic branding strategy known as laddering: you find a point of differentiation that is relevant and sustainable. Here, the differentiation is no ads.

OpenAI needs ad revenue because AI is ruinously expensive to operate and ChatGPT subscriptions alone aren’t covering the cost. Google’s position is arguably worse. Its profits are built on search advertising, yet it is now building an AI product – Gemini – that threatens to destroy the business model that funds it.

Google probably wouldn’t have launched Gemini at all had OpenAI not forced its hand. Google executives publicly mocked OpenAI for putting ads inside ChatGPT, even as Google uses chatbot interactions to shape the ads on Google.com. For Google, the advertising question isn’t a branding exercise. It’s existential.

The money flowing into AI is immense. But so is the money being destroyed by it. Anthropic’s new automation tools wiped $300bn off software stocks – in a single day. An AI tax-planning tool from a company called Altruist sent UK wealth managers’ shares tumbling. The companies getting richer from all of this are a very small group.

But there is something more unsettling beneath all of this. As the columnist Ross Douthat has argued, people like the human touch. That’s why player pianos didn’t kill pianists and self-checkouts didn’t eliminate cashiers.


But AI is fundamentally different from every previous automation technology: it simulates the human. The more people confide in Claude, trust it and form something that resembles a relationship with it, the more they hand over voluntarily – their anxieties, their finances, their decisions. And the more easily it can replace white-collar workers.

The same quality that makes Claude feel like a therapist is what will make it an acceptable replacement for the financial adviser, the lawyer, the customer service agent.

Is putting ads in AI the sucker move? The big money isn’t in advertising to people through their chatbot. It is in making the chatbot feel so human that people give it everything. Anthropic’s TV advert doesn’t reject the commercial model. Like Apple’s ad before it, it tries to make clear that Anthropic is playing a different game.

Andy Pemberton is a content expert who edited Q magazine in London and launched Blender magazine in New York